microgpt on the ESP32 – but... why?

Then I remembered that Andrej Karpathy had dropped microgpt a couple of weeks ago, and thought it might be fun to try and get a GPT running on the ESP32. I've got a couple of them lying around from a few other tinkering projects, as well as a functioning ESP32 Rust project to base it on.

I am very much the dog-on-computer

(I have no idea what I'm doing)

With the blog post and my other project as a starting point, it was, however, pretty easy to get to a functional port of microgpt training and then generating a continuous stream of names on the ESP32!

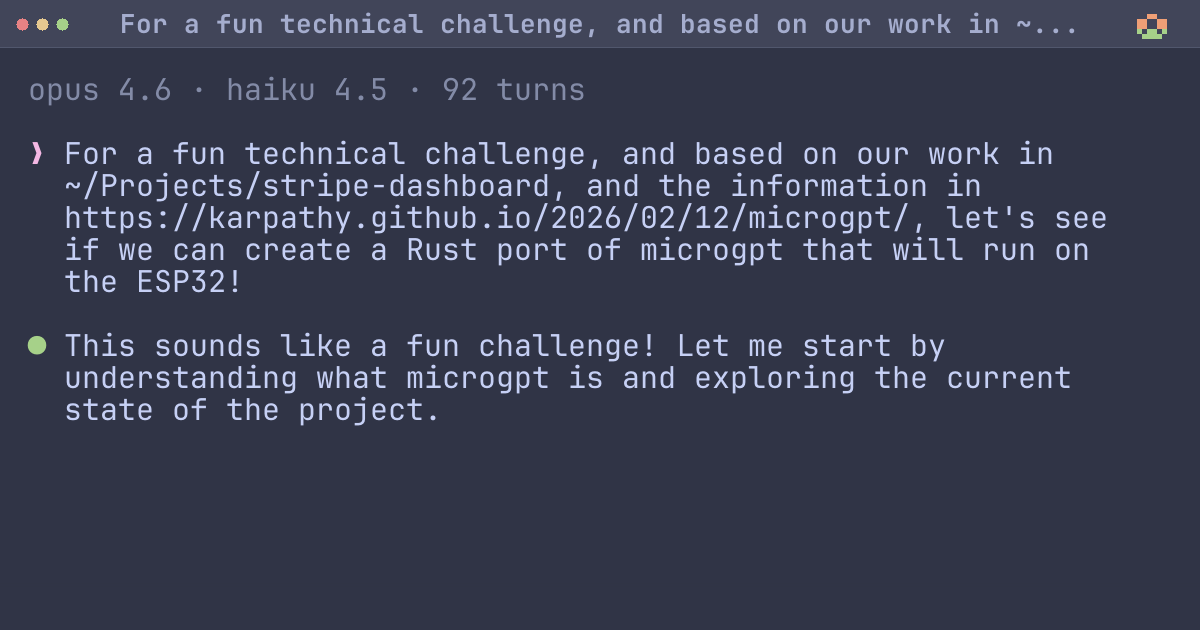

The code is here, and the Claude session that produced the first functioning variant of the project is below:

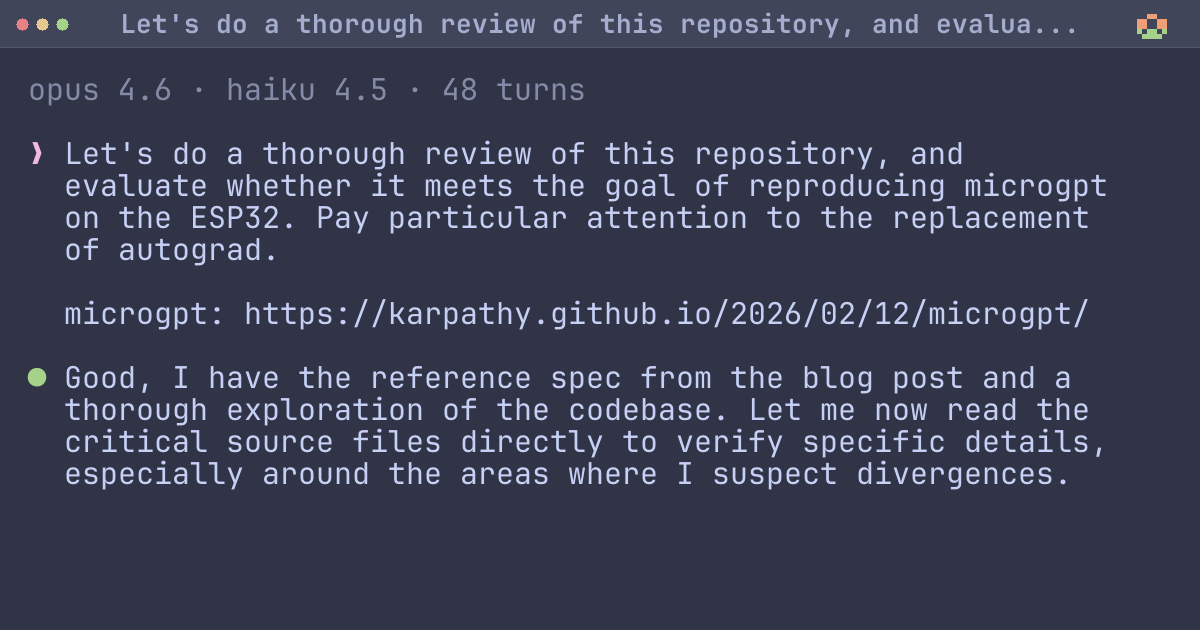

I also had a fresh session of Claude conduct a review, which spotted that the implementation was missing RMSNorm, and filled in the gaps.

What's the point?

Well, I learned that training a language model on an ESP32 is something that can be done. I did not know that yesterday. I also found this is not by any means the first or even most interesting attempt. David Bennett used a slightly larger ESP32 variant (1MB of RAM, still minuscule) to spit out 20 tokens a second of much more recognizable "LLM" output. Two years ago!

I think I've learned a little more than I'd have learned reading a blog post from someone else describing how they'd done it. I did a little post-Claude tinkering with stuff I'd remembered from the last ESP32 project (core pinning, RNG).

Does this magically make me able to understand the math? No, I'd have to use my brain more for that, but I've read some of the code, and have given Karpathy's post a closer read than I might have done otherwise.

This was a shower thought that was turned into a working demo. A year ago, I'd have forgotten about it and moved on, or jotted something down in a list of rainy day projects that I would realistically never get around to.

Some people will find this unsatisfying, maybe even a quintessential example of the missed learning opportunity that happens when someone uses LLMs. I'm not sure I even disagree in principle. I just know that I was very unlikely to ever even attempt to do this. There are lots of random ideas that I'll never get around to.

The space of possible ideas is large, and time is finite. Maybe noodling on this for an hour or two will open me up to other possibilities. Too early to tell.

I played video games a lot as a teenager, and watched television, and both of these were supposedly going to rot my brain. Brains are more resilient than they get credit for.

There is currently no comments system. If you'd like to share an opinion, either with me or about this post, please feel free to do so via email (ross@duggan.ie), on Mastodon (@duggan@mastodon.ie), or even on Hacker News.

Changelog

- Fixed a typo.